AI Music Production Tools 2026: Cutting Friction in Workflows

I watched a producer friend of mine spend three hours last month trying to extract a vocal from a stereo mix.

He tried EQ carving and phase‑inversion hacks he’d picked up from YouTube over the years, pulling the vocal up by a few decibels here, taking the drums down a few decibels there.

He finally gave up, muted the original track, and rebuilt the entire instrumental from scratch around the vocal he’d managed to salvage.

That’s three hours of creative energy burned on something that was never going to change the emotional arc of the track.

As more AI tools enter the music production world, these kinds of stories are becoming more common, not less.

We’re promised “instant masters,” “one‑click remixes,” and full songs in 30 seconds. But for many working producers, more software hasn’t meant more finished music. It’s just meant more decisions, more options, and—if we’re honest—more creative fatigue.

In 2026, the most interesting AI music tools are not the ones promising to replace producers.

They’re the ones that cut the friction around the parts of the process we secretly hate, and then stop at the point where creative judgment begins.

Let’s unpack what that looks like in a modern workflow.

What Creative Fatigue Really Costs Producers Inside the DAW

Every producer knows the difference between good tired and bad tired.

Good tired is walking away from a session after three hours of tweaking the snare, knowing the groove finally hits right.

Bad tired is realizing you’ve spent the same three hours trying to clean up a vocal that should have been re‑recorded, or troubleshooting a phase problem on a reference track you only needed for 16 bars.

In 2026, producers have more tools than ever.

Surveys from major platforms show that while a large majority of artists now use some form of AI in their process, most still finish roughly the same number of tracks per year as they did before. The bottleneck hasn’t moved; it has just become more obvious.

Talk to working producers and you hear the same pain points:

- Endless sound selection and preset scrolling.
- Rebuilding the same basic drum patterns and chord shapes from scratch.
- Technical chores (stem separation, noise reduction, loudness matching) that eat into limited studio time.

The result is a specific kind of creative fatigue.

You’re not burned out from making too many decisions that matter. You’re burned out from making too many decisions that don’t.

This is where AI can actually help—if you use the right tools for the right jobs.

AI Music Production Tools in 2026 – Utility vs Sketchpads

When producers say “AI music tools” in 2026, they are usually talking about two very different categories of software.

Telling them apart is the first step in deciding what belongs in your workflow.

Utility AI Tools – Compressing the Technical Work

Utility tools automate tasks you were already doing by hand, only more slowly and with more swearing.

Typical examples include:

- AI stem separation tools that pull vocals, drums, bass, and other instruments out of a stereo file with far fewer artifacts than the old EQ and phase‑inversion tricks.
- AI mastering assistants that give you a quick, consistent starting point for loudness and tonal balance, so your reference playlist stops jumping 6 dB every time you switch tracks.
- AI MIDI tools that suggest chord progressions, basslines, and rhythmic ideas based on what you’ve already sketched, helping you avoid the “blank piano roll” stare‑down.

For example, professional stem separation tools like Get Stems let you split a finished song into up to six separate instrument tracks—vocals, drums, bass, and more—for remixing and detailed production work. For a full comparison of AI stem splitters, extenders, and vocal removal in 2026, see our best AI stem splitter and extender guide.

Used well, utility tools shave minutes or hours off jobs that don’t really require taste.

They clean the road, but they don’t decide where you’re going.
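To make the loudness-matching chore concrete, here is a minimal sketch of the arithmetic behind it. It is an illustration under stated assumptions, not how any particular mastering assistant works: the function names are hypothetical, the test signals are synthetic sine waves, and it measures plain RMS level, whereas real tools typically use a perceptual loudness measure such as LUFS.

```python
import math


def rms_dbfs(samples):
    """RMS level of float samples (range -1.0..1.0) in dB relative to full scale."""
    if not samples:
        raise ValueError("samples must not be empty")
    mean_square = sum(s * s for s in samples) / len(samples)
    if mean_square == 0.0:
        return float("-inf")  # digital silence has no finite level
    return 10.0 * math.log10(mean_square)


def matching_gain_db(samples, target_dbfs):
    """Gain in dB that would move the clip's RMS level to target_dbfs."""
    return target_dbfs - rms_dbfs(samples)


def apply_gain(samples, gain_db):
    """Scale samples by a gain expressed in dB."""
    factor = 10.0 ** (gain_db / 20.0)
    return [s * factor for s in samples]


def sine(amplitude, n=4800, freq=100.0, rate=48000):
    """Synthetic stand-in for a reference track (hypothetical test signal)."""
    return [amplitude * math.sin(2 * math.pi * freq * i / rate) for i in range(n)]


TARGET_DBFS = -14.0  # arbitrary target chosen for this sketch

loud = sine(0.5)    # twice the amplitude of `quiet`, so about 6 dB hotter
quiet = sine(0.25)

matched_loud = apply_gain(loud, matching_gain_db(loud, TARGET_DBFS))
matched_quiet = apply_gain(quiet, matching_gain_db(quiet, TARGET_DBFS))

print(f"before: {rms_dbfs(loud):.2f} dBFS vs {rms_dbfs(quiet):.2f} dBFS")
print(f"after:  {rms_dbfs(matched_loud):.2f} dBFS vs {rms_dbfs(matched_quiet):.2f} dBFS")
```

The point of the sketch is that the job is pure bookkeeping: measure, subtract, scale. Nothing in it requires taste, which is why it is a good candidate to hand off.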

AI Music Sketchpad Tools – Generating Ideas, Not Finished Songs

The second major category is AI music sketchpad tools.

Instead of promising a finished, radio‑ready track, they generate short, structured musical ideas—loops, sections, stems, and MIDI—that you can drag into your DAW and reshape into your own sound.

You might already use chord plugins like Scaler or Cthulhu to get unstuck harmonically. AI sketchpads are the next step.

Rather than suggesting a single progression, they propose multi‑track moments—drums, bass, chords, and lead elements interacting together—so you’re reacting to music, not just theory on a grid.

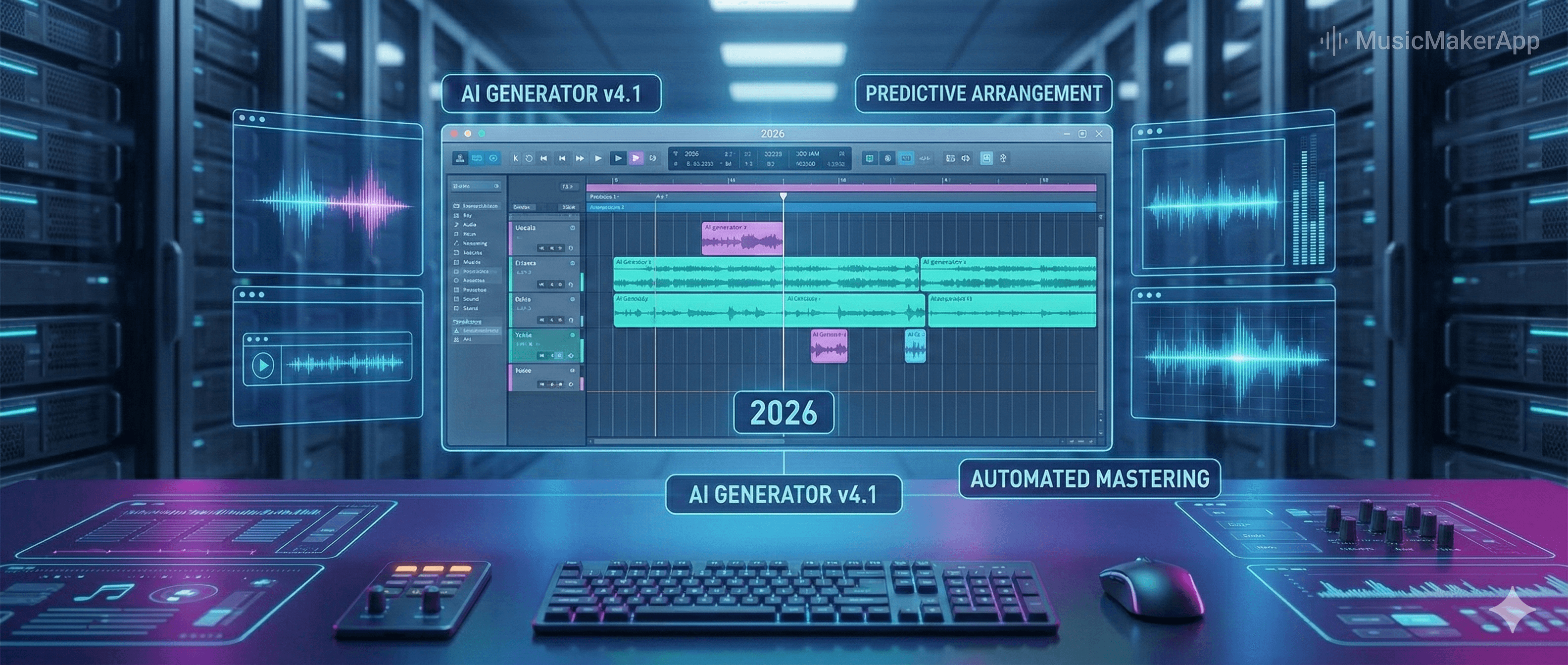

MusicMakerApp is one example of this sketchpad approach. It is a browser‑based AI music maker that generates royalty‑free stems and MIDI you can arrange and re‑voice inside your DAW, instead of locking you into a one‑click “AI song” preset.

This distinction matters.

Full‑song generators often compress the wrong part of the workflow. They rush you through structure and sound design—the fun, creative parts—and leave you with a track that’s emotionally flat and sonically locked.

Sketchpads flip that equation.

They compress the setup—the blank grid, the first eight bars—and then get out of the way so you can do the work that actually makes a record yours.

The Workflow Bridge – From AI Generation to Real Music Production

The most interesting thing I’ve seen in 2026 sessions is not that producers are “letting AI make songs.”

It’s that they’re using AI to bridge the hardest gap in the process: from vague idea to something you can actually arrange, edit, and mix.

A modern AI‑assisted music workflow doesn’t look like clicking one button and exporting a master.

It looks more like a relay race between AI tools and the DAW.

A Typical 2026 AI Music Production Workflow

A realistic AI‑assisted workflow in 2026 often looks like this:

- Set a musical direction in an AI music sketchpad. Choose mood, tempo, and style, then generate a few short sections or loops. Maybe you ask for a “warm 120 BPM late‑night groove with soft keys and subby bass” and audition three or four different takes on that brief.
- Export stems and MIDI. Bounce individual drums, bass, chords, and melodic parts as WAV, plus MIDI files for the musical content you want to re‑voice. Clean 24‑bit WAV stems and standard MIDI make it easy to integrate into any modern DAW.
- Import everything into your DAW. Pull the stems and MIDI into Ableton, FL Studio, Logic Pro, or whatever you use. Route each part through your own synths, samplers, and plugin chains. At this point, it stops looking like “AI output” and starts looking like any other session.
- Mute, rewrite, and humanize. Aggressively remove what you don’t need, rewrite key lines, and add performance, automation, and texture so the track sounds like you. Automation curves, micro‑timing, and sound selection are where your personality shows up.
- Finish with your usual mix and master chain. Treat AI‑generated parts the way you would treat stems from a collaborator or a sample pack: useful starting points, never commandments. You still make all the balancing, EQ, compression, and saturation decisions.

Tools like MusicMakerApp’s Creation Lab already expose this as a set of structured AI music creation flows—step‑by‑step templates that connect prompts, generation, and export into a complete workflow you can rely on session after session.
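The micro-timing part of the "humanize" step in the workflow above can be sketched in a few lines. This is a rough illustration, not any DAW's or plugin's actual humanize function: the `Note` type, the `humanize` helper, and the jitter amounts are all hypothetical, and notes are plain Python objects rather than data read from a real MIDI file.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Note:
    start_beats: float  # position on the grid, in beats
    pitch: int          # MIDI note number
    velocity: int       # MIDI velocity, 1..127


def humanize(notes, timing_jitter=0.02, velocity_jitter=8, seed=None):
    """Return a copy of notes with small random timing and velocity offsets.

    timing_jitter is the maximum shift in beats (early or late);
    velocity_jitter is the maximum change in MIDI velocity.
    Both defaults are assumptions picked for this sketch.
    """
    rng = random.Random(seed)  # a seed makes the "performance" repeatable
    played = []
    for note in notes:
        shift = rng.uniform(-timing_jitter, timing_jitter)
        start = max(0.0, note.start_beats + shift)  # never before bar start
        velocity = note.velocity + rng.randint(-velocity_jitter, velocity_jitter)
        velocity = max(1, min(127, velocity))  # keep inside the valid MIDI range
        played.append(Note(start, note.pitch, velocity))
    return sorted(played, key=lambda n: n.start_beats)


# A rigid eighth-note hi-hat line: pitch 42 is closed hi-hat in General MIDI.
grid = [Note(start_beats=i * 0.5, pitch=42, velocity=100) for i in range(8)]
played = humanize(grid, seed=7)

for before, after in zip(grid, played):
    print(f"{before.start_beats:.3f} -> {after.start_beats:.3f}  vel {after.velocity}")
```

Random jitter like this is only a starting point. Deciding which notes should drag, which should push, and which should stay dead on the grid is still a judgment call made by ear.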

Mind the Licensing and Rights

There’s one very non‑musical detail that becomes musical the moment you want to release a track: rights.

Across almost every serious platform in 2026, full commercial rights for AI‑generated stems and MIDI live behind paid tiers rather than free plans.

Treat AI output the way you treat sample packs: read the license once before you release. For costs, ownership, and a compliance checklist for AI‑generated albums, see our AI album licensing 2026 guide.

Platforms built for professional use, like MusicMakerApp, explicitly frame their AI output as copyright‑safe, royalty‑free building blocks for commercial projects, so you can focus on the music instead of worrying about clearance emails later.

Why AI in Music Production Still Needs You

A lot of headlines in 2026 still ask whether AI will replace producers.

From where I’m sitting in real sessions, the answer is simpler and less dramatic: AI is replacing the parts of production that never required a producer in the first place.

It’s very good at:

- Cleaning up technical problems.
- Generating plausible harmonic and rhythmic patterns.
- Filling in gaps when you’re too tired to program another hi‑hat variation.

It is much worse at:

- Knowing when a “correct” chord progression is emotionally wrong for the story you’re trying to tell.
- Deciding that the chorus should arrive eight bars later so the listener has time to breathe.
- Hearing that a slightly out‑of‑time bass note is the most human moment in the whole track.

As AI flattens the technical gap between beginners and professionals, the only durable advantage left is creative judgment: not who owns the most plugins, but who has the ears to make calls like the ones above.

In 2026, the producers building lasting careers are not the ones who let AI run the whole show.

They are the ones who use AI to compress the boring parts, then rely on their own taste to decide what stays, what goes, and when the track finally says what it needs to say.

FAQ – AI Music Production Tools & Workflows in 2026

1. What are the main types of AI music production tools in 2026?

They broadly fall into two buckets: utility tools (AI stem separation, AI mastering, AI MIDI assistants) that speed up technical tasks, and AI music sketchpad tools that generate loops, sections, stems, and MIDI you can arrange inside your DAW.

2. How are producers using AI sketchpads without sounding generic?

They use AI for the first 8–16 bars—getting from a blank session to a workable idea—then mute, replace, and rewrite parts. The final arrangement, sound design, and mix decisions still come from their own ears, not from a preset.

3. Does AI‑generated music actually matter for listeners in 2026?

Yes, but mostly as part of a larger ecosystem. Surveys from major firms show that a significant share of 18–44‑year‑olds listen to AI‑generated music for several hours each week, yet AI tracks still represent under 1% of total streams. Discovery and promotion remain mostly human‑driven.